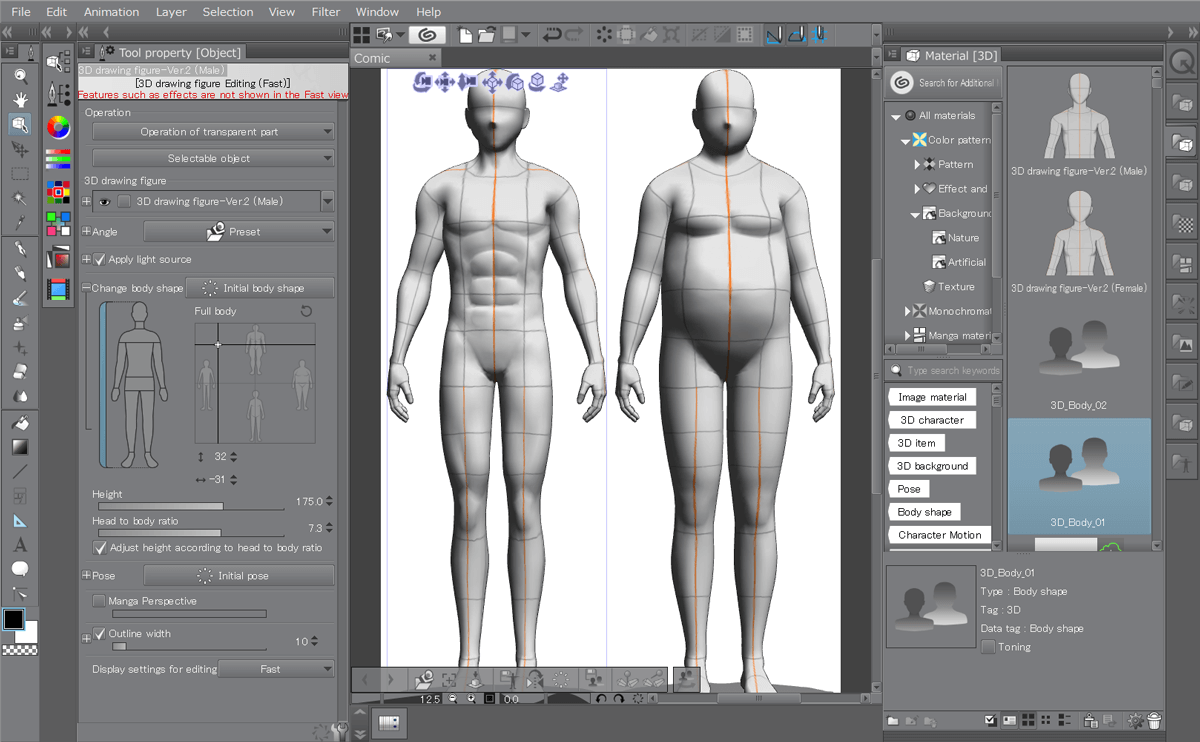

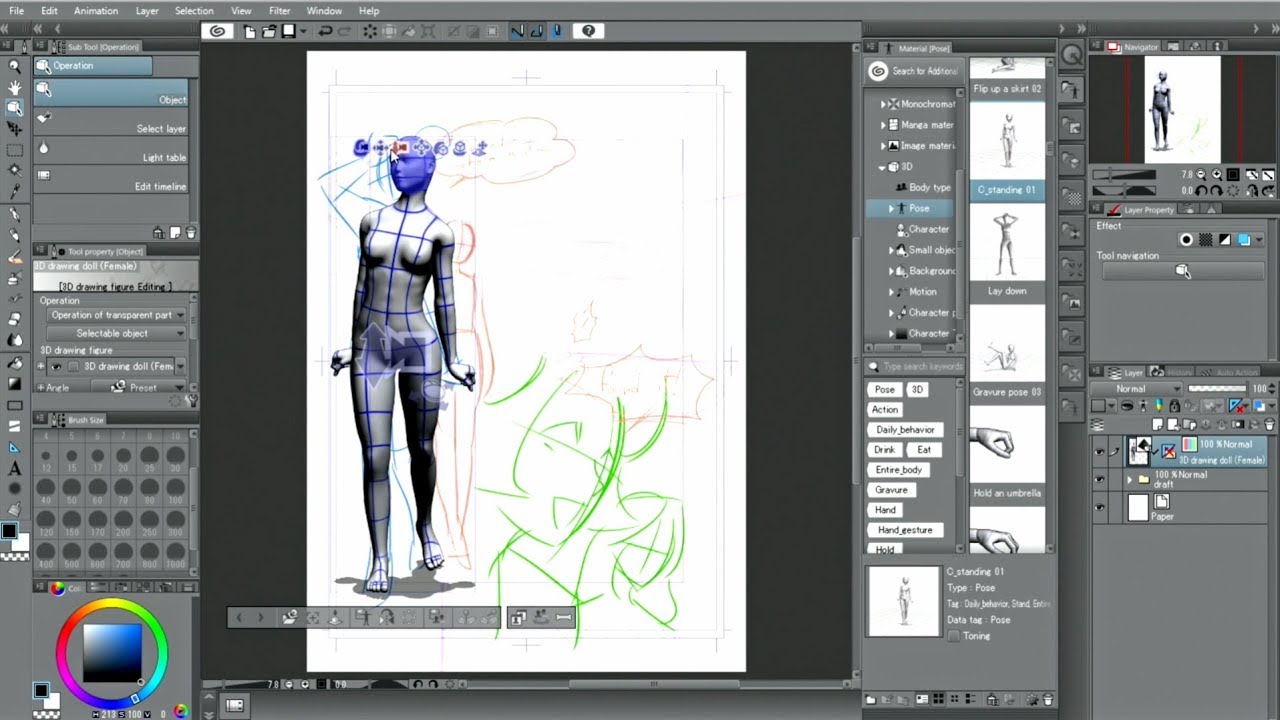

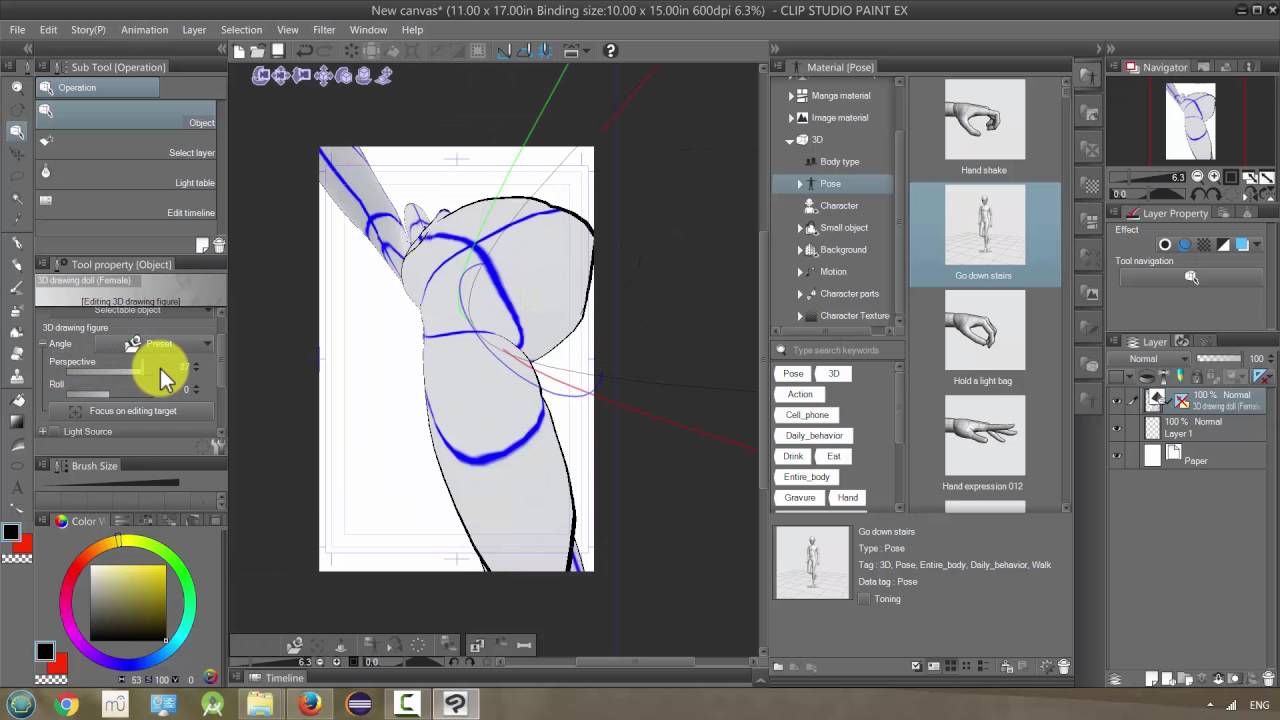

Apache Kafka Connector: Flink provides an Apache Kafka connector for reading data from and writing data to Kafka topics with exactly-once guarantees.

Kafka on Cloudera: test = TOPIC_AUTHORIZATION_FAILED.

To publish messages to Kafka you have to create a producer. Simply call the producer function of the client to create it: `const producer = kafka.producer()`.


Go to your Kafka installation directory; for me, it's `D:\kafka\kafka_2.12-2.2.0\bin\windows`. You can read all the Kafka messages in a topic with the console consumer. Open a command prompt and run the following command: `kafka-console-consumer.bat --bootstrap-server localhost:9092 --topic chat-message --from-beginning`.

To delete records, execute: `kafka-delete-records --bootstrap-server localhost:9092 --offset-json-file delete-records.json`. Here `delete-records.json` specifies that for partition 0 of the topic "my-topic" we want to delete all the records from the beginning until offset 3.

You create a new replicated Kafka topic called my-example-topic, then you create a Kafka producer that uses this topic to send records.
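The contents of `delete-records.json` are not shown above. As a minimal sketch, assuming the JSON layout that the `kafka-delete-records` tool reads (a `partitions` list plus a `version` field), the file for the example just described could be generated like this. The helper name `build_delete_records_spec` is made up for illustration.

```python
import json


def build_delete_records_spec(topic, partition, offset):
    """Build the offset spec for one topic partition.

    Assumption: the tool expects {"partitions": [...], "version": 1} and
    deletes records below the given offset.
    """
    return {
        "partitions": [
            {"topic": topic, "partition": partition, "offset": offset}
        ],
        "version": 1,
    }


if __name__ == "__main__":
    # Partition 0 of "my-topic", deleting from the beginning until offset 3.
    spec = build_delete_records_spec("my-topic", 0, 3)
    print(json.dumps(spec, indent=2))
```

Saving the printed JSON as `delete-records.json` gives a file that can be passed to `--offset-json-file`.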

Kafka Tutorial: Writing a Kafka Producer in Java. In this tutorial, we are going to create a simple Java example that creates a Kafka producer.

| Error | Code | Retriable | Description |
| --- | --- | --- | --- |
| TRANSACTIONAL_ID_AUTHORIZATION_FAILED | 53 | False | Transactional Id authorization failed. |
| SECURITY_DISABLED | 54 | False | Security features are disabled. |
| OPERATION_NOT_ATTEMPTED | 55 | False | The broker did not attempt to execute this operation. This may happen for batched RPCs where some operations in the batch failed, causing the broker to respond without trying the rest. |

From a mailing-list reply by Madhan Neethiraj (Monday, 22 October 2018, 17:04; subject: "Re: Atlas and Kafka: Authorization Failed"): AFAIK, Kafka requires Kerberos to authenticate producers and consumers.

Building Reliable Reprocessing and Dead Letter Queues with Apache Kafka. In distributed systems, retries are inevitable. From network errors to replication issues and even outages in downstream dependencies, services operating at a massive scale must be prepared to encounter, identify, and handle failure as gracefully as possible.

In Strimzi 0.14.0 we have added an additional authentication option to the standard set supported by Kafka brokers. Your Kafka clients can now use OAuth 2.0 token-based authentication when establishing a session to a Kafka broker. With this kind of authentication, Kafka clients and brokers talk to a central OAuth 2.0 compliant authorization server.

A Kafka topic is the category, group, or feed name to which messages can be published. Kafka's default behavior will not allow you to delete a topic. To modify this, you must edit the configuration file. Kafka's configuration options are specified in server.properties. Open this file with nano or your favorite editor.

Managing offsets and handling rebalances gracefully is the most critical part of implementing a correct Kafka consumer. Suppose a commit failed for some recoverable reason, and you want to retry it after a few seconds. Since this was an asynchronous call, you may have initiated another commit in the meantime, without knowing that your previous commit is still waiting.

Let me start by talking about the Kafka consumer. A Kafka consumer-based application is responsible for consuming events, processing them, and making a call to a third-party API. To create a consumer listening to a certain topic, we use `@KafkaListener(topics = …)` on a method in a Spring Boot application.

Back in the old days, older Kafka versions used a simple heartbeat mechanism that was triggered when you called your poll method. If the consumer didn't contact Kafka in time, it was assumed to be dead; otherwise it was still up and running and a valid member of its consumer group.

In this example Neo4j and Confluent will be downloaded in binary format and the Neo4j Streams plugin will be set up in SINK mode. The data consumed by Neo4j will be generated by the Kafka Connect Datagen. Please note that this connector should be used just for test purposes and is not suitable for production.
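Returning to the asynchronous commit problem described earlier: one common guard, which is not taken from the text above, is to number each commit attempt and retry a failed commit only if no newer attempt has started since. The sketch below shows just that bookkeeping. `AsyncCommitTracker` is a hypothetical helper, and no real Kafka client is involved.

```python
class AsyncCommitTracker:
    """Tracks async commit attempts by sequence number (hypothetical helper)."""

    def __init__(self):
        self._latest_seq = 0

    def start_commit(self):
        """Register a new commit attempt and return its sequence number."""
        self._latest_seq += 1
        return self._latest_seq

    def should_retry(self, seq):
        """Retry a failed commit only if no newer commit was started after it."""
        return seq == self._latest_seq


if __name__ == "__main__":
    tracker = AsyncCommitTracker()
    first = tracker.start_commit()   # this commit fails later
    second = tracker.start_commit()  # a newer commit is already in flight
    print(tracker.should_retry(first))
    print(tracker.should_retry(second))
```

In the usage above the first attempt is not retried, because retrying it could overwrite the offset sent by the second, newer attempt.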

In this approach, when the brokers in the cluster fail to meet the producer configuration (such as acks), or when other broker-related Kafka metadata failures occur, those events are produced to a recovery or retry topic.
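The routing described above can be sketched without a real cluster. In this minimal illustration, `InMemoryProducer`, `BrokerUnavailable`, `send_with_fallback` and the topic name `my-topic.retry` are all hypothetical stand-ins. A real producer would surface failures through its own exceptions or callbacks, and the sketch assumes the retry topic itself is still reachable.

```python
from dataclasses import dataclass, field


class BrokerUnavailable(Exception):
    """Stand-in for a broker-side failure such as unmet acks (hypothetical)."""


@dataclass
class InMemoryProducer:
    """Hypothetical stand-in for a Kafka producer; records sends per topic."""
    failing_topics: set = field(default_factory=set)
    sent: dict = field(default_factory=dict)

    def send(self, topic, event):
        # Simulate a broker failure for selected topics.
        if topic in self.failing_topics:
            raise BrokerUnavailable(topic)
        self.sent.setdefault(topic, []).append(event)


def send_with_fallback(producer, topic, event, retry_topic="my-topic.retry"):
    """Send to the main topic; on a broker failure, route to the retry topic.

    Returns the name of the topic that accepted the event.
    """
    try:
        producer.send(topic, event)
        return topic
    except BrokerUnavailable:
        # Assumption: the retry topic is reachable even though the main send failed.
        producer.send(retry_topic, event)
        return retry_topic
```

A separate consumer would then read the retry topic and reprocess those events. That part is left out here.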